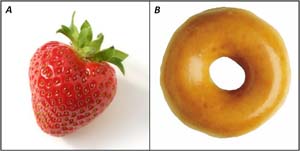

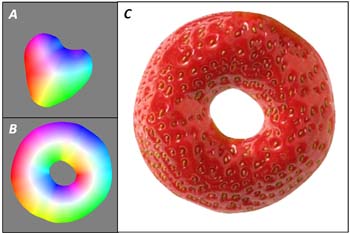

Suppose we wanted to take some complex material appearance, such as the juicy texture of the strawberry in Image A to the left, and recreate that appearance on a new shape, like the donut in Image B. How might we do it? The obvious (and very difficult) way would be to gain a complete understanding of both images (the raw geometry, the reflectance, the illumination, the texture statistics) and then somehow put all those pieces together to generate a new image. But extracting those quantities from just these two images would be almost impossible, and re-rendering the new image wouldn't be a walk in the park either. Could there be an easier way?

It turns out there is. Our material transfer approach sidesteps many of the hairier steps of that full analysis-and-synthesis route by treating the original image as nothing more than a two-dimensional texture. Of course, the behavior of the texture changes from one location in the image to another, but that behavior is linked to one very important thing: where you are in the shape. And how do you know where you are in the shape? With Puffball inflation. Inflating both the source and target silhouettes with Puffball imposes a field of surface normals on both shapes (see the figure to the right), and these surface normals correspond in a robust and intuitive way with where pixels lie relative to the shape: central or peripheral, near a left edge or near a right edge, and so on. The Puffball surface normals tell you a pixel's location in the shape, and our material transfer algorithm rests on the hypothesis that, more often than not, that location gives you all the information you need to generate a realistic-looking novel image. We implement the material synthesis using the Puffball coordinates in a coarse-to-fine scheme, illustrated in the video below. The result is shown in Figure C.
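To make the idea of "Puffball normals as shared coordinates" concrete, here is a minimal sketch under several stated assumptions. It assumes the Puffball height at a pixel is set by the tallest sphere, centred in the image plane, whose projected disk fits inside the silhouette, and it uses the distance to the nearest background pixel centre as a stand-in for the distance to the silhouette boundary. It also replaces the coarse-to-fine synthesis with the crudest possible stand-in: each target pixel simply copies the source pixel whose Puffball normal points in the most similar direction. The function names (`puffball_height`, `surface_normals`, `transfer_by_normals`) are hypothetical, and silhouettes are small boolean grids processed by brute force.

```python
import math


def distance_to_background(mask):
    """Euclidean distance from each foreground pixel to the nearest background
    pixel centre; pixels just beyond the grid border count as background."""
    rows, cols = len(mask), len(mask[0])
    background = [(r, c) for r in range(-1, rows + 1) for c in range(-1, cols + 1)
                  if not (0 <= r < rows and 0 <= c < cols) or not mask[r][c]]
    return [[min(math.hypot(r - br, c - bc) for br, bc in background) if mask[r][c] else 0.0
             for c in range(cols)] for r in range(rows)]


def puffball_height(mask):
    """Assumed Puffball height: the union of spheres centred in the image plane
    whose projected disks fit inside the silhouette (brute force, O(N^2))."""
    rows, cols = len(mask), len(mask[0])
    dist = distance_to_background(mask)
    centres = [(r, c, dist[r][c]) for r in range(rows) for c in range(cols) if mask[r][c]]
    height = [[0.0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            if mask[r][c]:
                # Tallest sphere covering (r, c): sqrt(radius^2 - offset^2).
                height[r][c] = max(math.sqrt(rad * rad - ((r - cr) ** 2 + (c - cc) ** 2))
                                   for cr, cc, rad in centres
                                   if (r - cr) ** 2 + (c - cc) ** 2 < rad * rad)
    return height


def surface_normals(mask):
    """Unit normals (nx, ny, nz) of the inflated surface, from central
    differences of the height map; x follows columns, y follows rows."""
    height = puffball_height(mask)
    rows, cols = len(mask), len(mask[0])

    def h(r, c):
        # Height is zero outside the grid and on background pixels.
        return height[r][c] if 0 <= r < rows and 0 <= c < cols else 0.0

    normals = {}
    for r in range(rows):
        for c in range(cols):
            if mask[r][c]:
                nx = -(h(r, c + 1) - h(r, c - 1)) / 2.0
                ny = -(h(r + 1, c) - h(r - 1, c)) / 2.0
                length = math.sqrt(nx * nx + ny * ny + 1.0)
                normals[(r, c)] = (nx / length, ny / length, 1.0 / length)
    return normals


def transfer_by_normals(source_mask, source_image, target_mask):
    """Naive stand-in for material transfer: colour each target pixel with the
    source pixel whose normal has the largest dot product with its own."""
    src = surface_normals(source_mask)
    tgt = surface_normals(target_mask)
    out = {}
    for pos, n in tgt.items():
        r, c = max(src, key=lambda p: sum(a * b for a, b in zip(src[p], n)))
        out[pos] = source_image[r][c]
    return out


if __name__ == "__main__":
    square = [[True] * 5 for _ in range(5)]
    # Toy "image": labels mark the left, middle and right of the source square.
    image = [["L" if c < 2 else ("M" if c == 2 else "R") for c in range(5)] for _ in range(5)]
    wide = [[True] * 9 for _ in range(5)]
    result = transfer_by_normals(square, image, wide)
    for r in range(5):
        print("".join(result[(r, c)] for c in range(9)))
```

Because this lookup works pixel by pixel at a single scale, it preserves no texture structure at all; it only illustrates how Puffball normals let a left-edge pixel in the target find left-edge material in the source, even when the two silhouettes have different proportions.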